Beyond the buzz: understanding the AI revolution

Why AI needs to be handled with care to avoid artificial stupidity.

Annelies Verhaeghe

19 July 2023

AI is everywhere. OpenAI launched the AI chatbot ChatGPT last November, Microsoft introduced its AI-powered Bing search engine in February, Google launched ‘Bard’ in March, and Meta followed by revealing its new AI image generation model ‘CM3leon’ last week. The idea is not new; in fact, AI has been around since the early 1950s. What is new is the breakthrough of generative AI. This type of AI can learn from past experiences, remember context and make decisions based on that knowledge. In other words, when prompted with the right questions and commands, it can generate new, unique output. So what are the value, the potential drawbacks and the business opportunities of this AI revolution?

Generative AI: a thinking and creating machine?

Since the 1950s, AI has been making its way into almost every aspect of our lives, from the personalised content you see on TikTok and Instagram, to product recommendations on Amazon, to the voice-controlled personal assistant that drives your smart home devices.

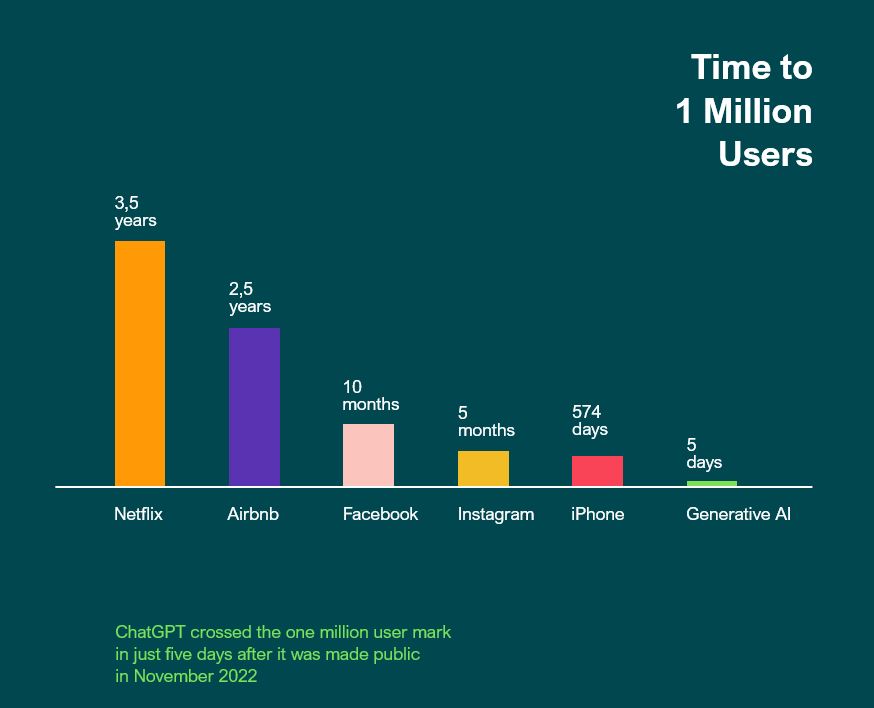

But it’s generative AI that is the true game changer, with an unprecedented adoption rate. ChatGPT, for example, crossed the one million user mark just five days after it was made public. In comparison, Netflix took 3.5 years, Facebook 10 months and Instagram 5 months.

Generative AI models, such as ChatGPT and Bard, are rightly generating a lot of excitement. They are based on Natural Language Processing (NLP), which enables them to understand and generate human language as well as remember past conversations. Combine this with a dialogue interface and you get a very ‘human-like’ experience when interacting with an AI chatbot. The system can answer almost every question on any subject, hold conversations, and share opinions in real time. And because the machine learns and improves with more interactions, and remembers this information in follow-up conversations, it almost seems to think and create by itself.

The dark side of AI

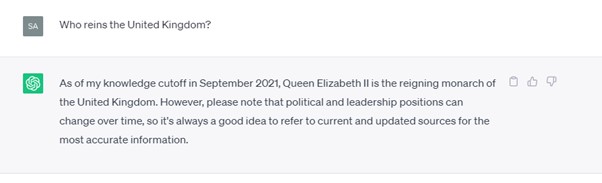

Generative AI systems are only as smart as the data they have been trained on. Since these chatbots rely on ‘older’ data, they cannot answer any question linked to recent events. For ChatGPT this means online content created up until late 2021. If you ask the tool ‘Who reigns over the United Kingdom?’, you get the below response.

The systems are not only trained on ‘older’ data, but also on data that lacks diversity, resulting in inappropriate or even offensive responses. Several studies show that ChatGPT is biased against certain races and generates misinformation about certain ethnic and social groups. OpenAI’s CEO, Sam Altman, admitted that ChatGPT has “shortcomings around bias”. Amazon also experienced the limits of AI when experimenting with an AI recruiting tool. It turned out the recruitment engine did not like women. Amazon’s computer models were trained on resumes that were submitted to the company over a 10-year period. And since most of them came from men (reflecting the male dominance across the tech industry), the system learned that male applicants were preferred.

Another watch-out when using these tools is so-called ‘AI hallucinations’. Since the chatbots are based on rigid statistical models, they can generate factually incorrect or nonsensical information that may look plausible. Examples range from simple math problems (according to ChatGPT, 8432 * 3391 is 28,580,912, while the correct answer is 28,592,912) to writing false biographies. The latter sparked a trend on social media where people would share their AI-generated bios, including prestigious awards they never won, teaching positions at esteemed universities they never held, incorrect hometowns and so on. New York-based lawyer Steven A. Schwartz experienced a very painful incident due to AI hallucinations. Without checking, he used output from ChatGPT to craft a motion. It turned out to be full of fake judicial opinions and legal citations, and he had to explain himself in court.
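The multiplication example above is easy to verify without a chatbot. As a minimal sketch (the variable names are our own and purely illustrative), a few lines of Python recompute the product and show how far off the quoted answer was:

```python
# Figures quoted in the text above; variable names are illustrative only.
chatbot_answer = 28_580_912        # the answer attributed to ChatGPT
actual_product = 8432 * 3391       # computed exactly by Python

# How far the chatbot's answer is from the real product
difference = actual_product - chatbot_answer

print(f"Actual product:  {actual_product:,}")
print(f"Chatbot answer:  {chatbot_answer:,}")
print(f"Difference:      {difference:,}")
```

The point is not the code itself, but the habit: when a generated answer can be checked with a deterministic tool, check it.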

It becomes hard to distinguish what is real and what is not. Google is working on a tool to make it easier to assess the context and credibility of search results, since its top results were cluttered with AI-generated versions of historic paintings.

This brings us to a final watch-out. You need to be very careful about data ownership, making sure you don’t share confidential data and personal information with open AI systems. Samsung already banned employee use of AI tools, fearing that the data stored on external servers is difficult to retrieve and delete, or might even be disclosed to other users. And recently several lawsuits have been filed around copyright issues in relation to how tech companies trained their AI tools.

Handle with care to avoid artificial stupidity

Generative AI is a powerful tool that has many business applications, from enhancing customer support with chatbots and virtual assistants, to supporting your IT department with writing computer code, to generating new ideas for product development. Its use will trickle down into every aspect of everyday business. Coca-Cola, for example, used generative AI to create its ‘Masterpiece’ advertisement, where a bottle of Coke travels through historic masterpieces to inspire an art student. German biotechnology company Evotec found a new anticancer molecule in just eight months via AI, a process that usually takes four to five years.

The research industry has also embraced AI, with new tools being launched almost every day. The use cases are endless, from doing desk research and generating survey questions to moderating insight communities and analysing complex data. But what happens if you create a survey without proper knowledge of how to ask the right questions, or start interpreting data without the right context?

The enthusiasm is valid, but caution is needed. Human power is still necessary to use AI in the correct way. When it falls into the wrong hands, artificial intelligence is nothing more than artificial stupidity.

Read more about how we are embracing the AI revolution here.